Recent Comments


Comments 1 to 50:

-

prove we are smart at 17:50 PM on 2 June 2026
Solar, wind, and EVs have knocked out a doomsday climate scenario

Well, that is a welcome headline: so the CO2 emissions we released in the 20th century, and are continuing to release in this 21st century, aren't going to doom us.

Because of rapidly increasing renewables, EVs, and a slowdown in fossil fuel use, there is now a new business-as-usual (BAU) scenario, but it is still a very dangerous course to follow.

This new path we are on is, IMHO, a medium-emission scenario (the new BAU).

"1. What “new business as usual” actually means

The phrase has shifted over the last decade. Historically, BAU meant something like RCP8.5: runaway fossil-fuel expansion. Today, because renewables have grown and coal is declining, BAU is more like:

- Emissions plateauing or declining slowly, not rapidly

- Warming continuing toward ~2.3–2.7 °C by 2100

- Carbon budgets for 1.5 °C exhausted within a few years

- Planetary boundaries for climate, biosphere integrity, freshwater, and novel entities pushed further into the danger zone

In other words: not worst‑case, but still deeply unsafe.

A BAU world doesn’t just “get warmer.”

It pushes multiple planetary boundaries further into the red, increasing the probability of tipping cascades:

- AMOC slowdown

- Amazon dieback

- West Antarctic Ice Sheet collapse

- Permafrost thaw

- Coral reef collapse

- Monsoon destabilisation

Once tipping elements begin to interact, the climate system becomes non‑linear — meaning human control diminishes.

This is the real risk of BAU:

we lose the ability to steer the Earth system back into a stable state.

5. What it means for “us”

For humanity, BAU means:

- A more dangerous, less predictable climate

- Shrinking safe zones for agriculture and habitation

- Higher economic volatility

- Greater geopolitical tension

- Increasing pressure on democratic institutions

- A world where adaptation becomes permanent, expensive, and unevenly distributed

For the biosphere, it means:

- Reduced resilience

- Accelerated extinction

- Loss of ecosystem services that civilisation depends on

- A shift toward a hotter, less biodiverse, less stable Earth".

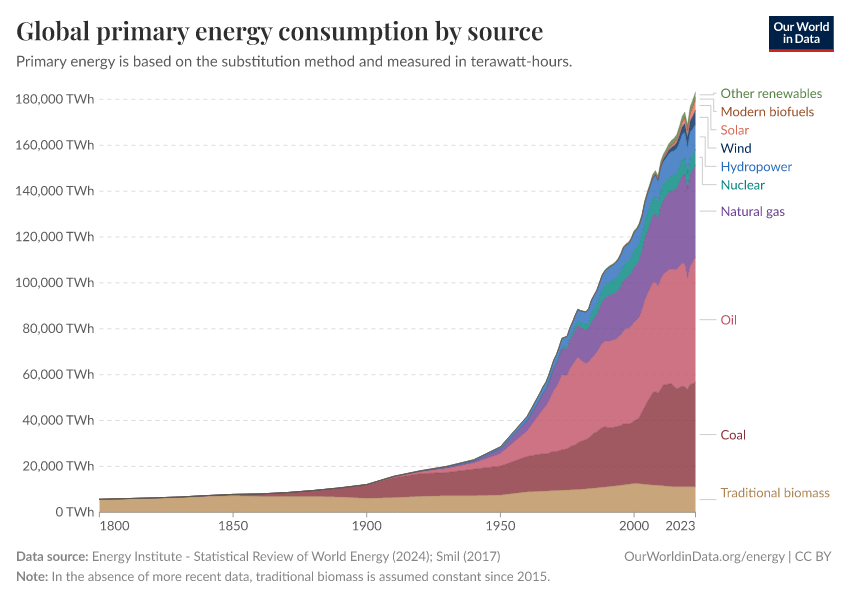

"Approximately one-seventh of the world's primary energy is now sourced from renewable technologies. This is based on renewable energy's share in the energy mix. Energy consumption represents the sum of electricity, transport, and heating". Here is the link to that: ourworldindata.org/renewable-energy. We desperately need to move past that 15%, even if it hurts our GDPs now, rather than have a warming world force lower GDP on us later.

-

MA Rodger at 09:37 AM on 1 June 2026
On the death of RCP8.5

Mel Reasoner @1,

The graphic in the OP does call itself "Illustrative", so it's not trying to pre-empt the actual CMIP7 modelling.

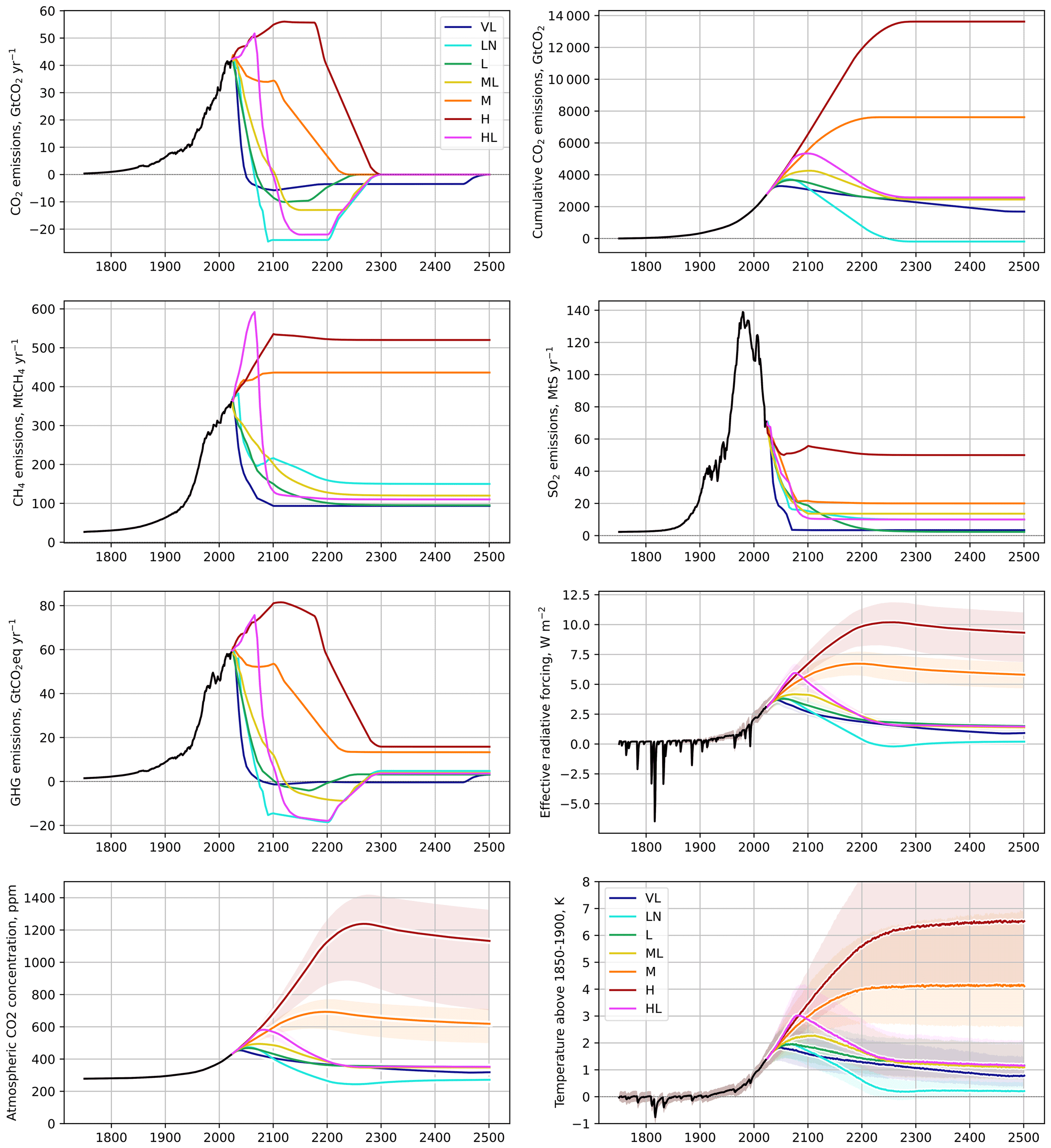

Other than the Forcing inputs (which are yet to be finalised, though they are expected to be "roughly consistent" with those shown), there are a whole lot of other assumptions that could have been input into the 841 FaIR model runs. Van Vuuren et al (2026) present the two-panel graphic in the OP showing "illustrative" temperature outputs, and that puts CMIP7-M for 2100 at +2.7ºC to +3.3ºC. The CMIP7-M is supposed to represent "the current policy situation (as of 2025) and trends continuing over the century", but that is open to considerable interpretation, with 'delivery' often deviating from 'policy' in short order.

The graphic below is Fig 2 from Van Vuuren et al (2026). Note the bottom right panel is showing temperature with "shaded regions show the 5–95 percentile range", and the CMIP7-M range at 2100 is something like +2.2ºC to +4.2ºC.

As for the recent rate of AGW, it is certainly no longer running along at the same old +0.18ºC/decade, which is where we were up to 2015. The +0.27ºC/decade you quote for the last decade is probably lower than I would calculate. But there are quite a few reasons to expect the longer-term warming under a slightly slower Forcing increase to be significantly slower than this last decade. Conversely, some reasoning says otherwise.

-

Mel Reasoner at 04:12 AM on 1 June 2026
On the death of RCP8.5

The van Vuuren et al. (2026) “Medium” scenario assumes current climate policies are frozen in place and results in approximately 2.8°C warming above 1850–1900 by 2100.

However, observed human-caused warming over the last decade has been about 0.27°C per decade (Forster et al., 2025), which, if continued linearly, would imply substantially higher warming by 2100 (~3.4°C). At the same time, atmospheric CO₂ concentrations, atmospheric CH₄ concentrations, and Planetary Heat Uptake (CERES) all continue to increase at increasing rates, and a recent paper suggests that global temperature itself may be accelerating (Foster and Rahmstorf, 2026).
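As a back-of-envelope check on the ~3.4°C figure, the "continued linearly" case can be sketched in Python. The baseline of about 1.36°C of human-induced warming in 2024 is an assumption supplied here for illustration (substitute the latest published value); the function name is hypothetical.

```python
# Straight-line extrapolation of human-induced warming, for illustration only.
# ASSUMPTION: ~1.36 °C above 1850-1900 in 2024 is an assumed baseline.
BASE_YEAR = 2024
BASE_WARMING_C = 1.36      # °C above 1850-1900 (assumed baseline)
RATE_C_PER_DECADE = 0.27   # recent rate quoted in the comment


def linear_warming(year: int) -> float:
    """Warming in `year` if the recent per-decade rate simply continues."""
    decades = (year - BASE_YEAR) / 10.0
    return BASE_WARMING_C + RATE_C_PER_DECADE * decades


if __name__ == "__main__":
    print(f"2100: {linear_warming(2100):.1f} °C")  # about 3.4 °C
```

This is only the straight-line case; scenario models such as the "Medium" one differ precisely in how the forcing, and hence the warming rate, evolves after today.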

My question is: what physical mechanism within a “current policies frozen in place” world causes the warming rate to slow sufficiently to limit warming to only ~2.8°C by 2100?

-

nigelj at 06:35 AM on 30 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

MAR @39, a lot of the details of OLS and linear regression calcs are above my level of training; however, having read your latest explanation, I follow your general mathematical reasoning quite OK. I can see the 2015 El Niño doesn't really explain such a long-lasting slowdown in the acceleration, yet the idea of one or more other natural wobbles adding to the El Niño to explain the slowdown does seem very unlikely. It would be one hell of a coincidence.

I do know plotting things like rates of acceleration, first derivatives, second derivatives, that sort of thing, can be notoriously deceptive and make it easy to fool yourself, but I can see you have compensated well for that in your technique. So you might be right that there's an acceleration-free period from 2015 to the present, and that this is because annual emissions have flattened off. It's what we would expect to see, but I suppose it could also be explained by a one-in-a-million coincidence of natural factors over what is a relatively short time period.

I suggest asking an AI to review the data and your analysis. This is exactly the sort of thing AI is good at. The AI could also potentially evaluate the impacts of all the El Niños and search for other things that may have had a carbon-sink effect after 2015, as well as the opposite. You just have to feed the AI absolutely everything you have and spoon-feed it very carefully.

-

MA Rodger at 17:33 PM on 29 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

nigelj @36,

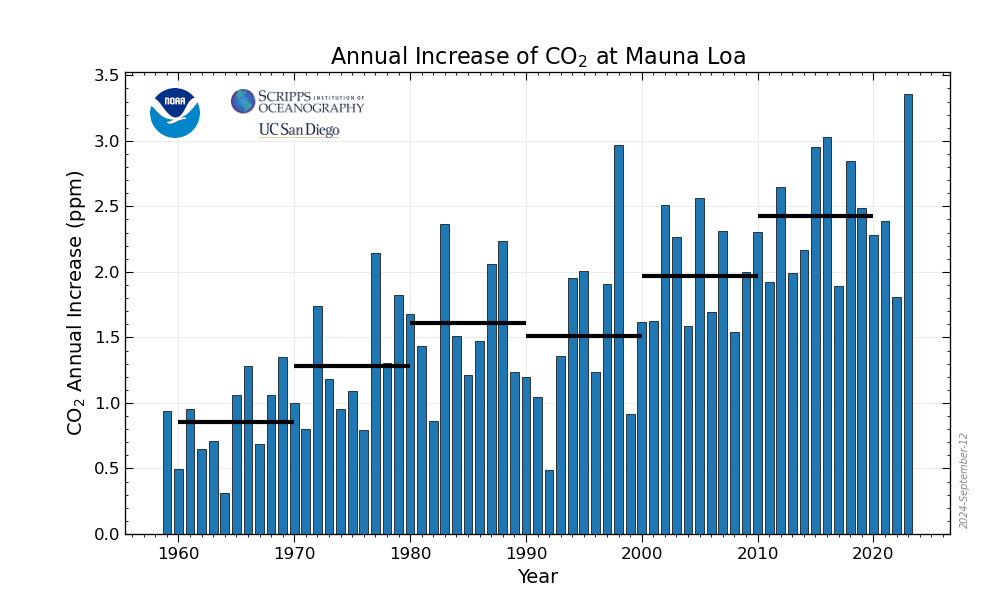

The 26th May 'white' graph titled 'Average 12-Month Increases in CO2' indeed does indicate "an obvious slow down after 2015" in the "rates of yearly acceleration of CO2."

You ask whether this slowdown is "just generated by the 2015 el nino."

I don't see how this could be solely a 2015 El Niño 'thing'. It conceivably could be a 'thing' involving the 2015 El Niño along with the impacts of other wobble-inducing events.

The 26th May 'white' graph is plotting OLS slopes, and these are mostly impacted by what is going on at the ends of the OLS periods. The OLS periods are short, so having an up-wobble at one end of an OLS period and a down-wobble at the other will result in the OLS for that period shooting away from what would be the underlying trend of a rolling OLS series. (And the same is true for non-linear regression types over short periods.)
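The end-sensitivity of short OLS periods can be illustrated with a minimal Python sketch on synthetic data (not the MLO record): the same pair of ±1.5 wobbles shifts a 10-point OLS slope nine times more when placed at the ends of the period than when placed at its centre. The series, wobble sizes and function name are assumptions chosen for illustration.

```python
import numpy as np


def ols_slope(t, y):
    """Ordinary-least-squares slope of y against t."""
    slope, _intercept = np.polyfit(t, y, 1)
    return slope


# Synthetic series: a straight line rising 2.0 units per step, 10 points long.
t = np.arange(10.0)
trend = 2.0 * t

# The same up/down wobble pair, placed at the ends vs. in the middle of the period.
ends = trend.copy()
ends[0] += 1.5    # up-wobble at the start
ends[-1] -= 1.5   # down-wobble at the end

middle = trend.copy()
middle[4] += 1.5
middle[5] -= 1.5

slope_true = ols_slope(t, trend)
slope_ends = ols_slope(t, ends)
slope_middle = ols_slope(t, middle)

if __name__ == "__main__":
    print(f"true {slope_true:.3f}, ends {slope_ends:.3f}, middle {slope_middle:.3f}")
```

OLS weights each point by its distance from the period's centre, which is why wobbles at the ends dominate a short fit.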

Because the short-period individual OLS series in the 26th May 'white' graph are so sensitive (and also in the lower trace of the graphic posted 20th May, another 'white' one**), the 26th May 'white' graph uses varying lengths of OLS in an attempt to sidestep such OLS wobble 'things'. In the 26th May 'white' graph, it is possible that the averages of OLS of different lengths are even then showing nothing but a coincidence of wobbles, but that would appear to be an improbable event. (** The 20th May 'white' graph used OLS of decreasing lengths, hoping to show something before the wobbles took over. And there is something to see.)

So I propose that what the 26th May 'white' graph (and the 20th May one) is picking up is the remarkably constant CO2 increase (thus an acceleration-free period) thro' 2015-to-date. This linearity can be seen directly plotted in yet another 'white' graph posted 22nd May.

So then, the question has to be 'Why does 2015-to-date have a constant rate of CO2 increase?'

It would require more than the 2016 El Niño but, combined with the following El Niños of 2020 & 2024, it could potentially manage it if their various wobble sizes-&-timings obliged.

However, my contention here*** is that the linearity results from the annual man-made CO2 emissions having flattened off. (The emissions data are plotted in the 'blue' graph of 20th May.) With a year or so for the southern hemisphere to catch up, the emissions data do fit the 2015-to-date linearity.
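The logic of that contention can be sketched with toy carbon bookkeeping in Python: if a constant airborne fraction of each year's emissions stays in the atmosphere, flat emissions give a constant ppm/yr rise (zero acceleration), while rising emissions give acceleration. The 51% airborne fraction is the value quoted in the comment; 2.124 GtC per ppm is a commonly used conversion factor; the emission paths and function names are hypothetical.

```python
GTC_PER_PPM = 2.124        # commonly used conversion: 1 ppm CO2 ≈ 2.124 GtC
AIRBORNE_FRACTION = 0.51   # value quoted in the comment for 2000-24


def co2_path(start_ppm, emissions_gtc, af=AIRBORNE_FRACTION):
    """Yearly CO2 levels when a fixed fraction `af` of each year's emissions stays airborne."""
    levels = [start_ppm]
    for e in emissions_gtc:
        levels.append(levels[-1] + af * e / GTC_PER_PPM)
    return levels


def second_differences(levels):
    """Year-on-year change in the annual increase (ppm/yr/yr)."""
    rises = [b - a for a, b in zip(levels, levels[1:])]
    return [b - a for a, b in zip(rises, rises[1:])]


# Hypothetical emission paths (GtC/yr), for illustration only.
flat = [11.0] * 10
rising = [11.0 + 0.1 * i for i in range(10)]

if __name__ == "__main__":
    print(max(abs(d) for d in second_differences(co2_path(400.0, flat))))  # ~0
    print(min(second_differences(co2_path(400.0, rising))))                # > 0
```

In this toy model the airborne fraction is held fixed by construction; the comment's point is that the observed fraction has in fact stayed close to constant.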

( *** I feel I am on solid ground with this contention. Though I am not well versed in the derivation of the numbers involved in the carbon cycle, the Airborne Fraction calculated by the Global Carbon Budget people has been pretty static of late, thro' 2000-24 at 51% [+/-1.7% 2sd]. Thus a change in carbon uptake by the oceans & biosphere & cement appears unlikely.)

-

Evan at 00:12 AM on 29 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Bob @37, thanks for weighing in. Yes, I understand that the x² coefficient represents half the acceleration. Because MAR was questioning the quadratic, I fitted using a powerlaw and took the second derivative of that. I checked my numbers and they are about half what they should be, but I don't know why. On the other hand, the accelerations from the quadratic are about right. Here they are:

- Acceleration fitting 2000-2015 data: 0.015 ppm/yr/yr

- Acceleration fitting 2005-2020 data: 0.063 ppm/yr/yr

- Acceleration fitting 2010-2025 data: 0.029 ppm/yr/yr

Taking some kind of average and applying it over the 2000-2025 time span in question gives us about the right increase. MAR would say that the acceleration is decreasing and reaching a plateau. But the methods he uses to justify a plateau are difficult to follow. Not saying they're wrong, just difficult to follow.

My goal is clear communication that is accepted by experts and novices, and which can be understood by novices. Apparently I am not achieving my goals as evidenced by the back and forth between MAR and myself.

I may simply adjust my discussion to emphasize the 10-year running average rates of increase, as shown here. The method is easy to describe and, I think, not challenged by anyone. Whatever it does or does not show about the underlying accelerations, it clearly shows we are going far too rapidly in the wrong direction. I really wonder how many people appreciate what an annual CO2 increase of 2.5 ppm/yr represents.

-

Bob Loblaw at 23:52 PM on 28 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Evan et al:

I have not been following this discussion in detail, but IIRC when using a quadratic equation/second-order polynomial, the x² coefficient represents half the acceleration. If you (Evan) are just quoting the coefficient from the curve fitting, then that would explain why you are out by a factor of two.
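The factor of two can be checked numerically with a minimal Python sketch: fit `numpy.polyfit` with degree 2 to synthetic, noise-free data built with a known acceleration, and the x² coefficient comes back as half that value, while the second derivative of the fitted polynomial (`numpy.polyder`) recovers it. The 0.03 ppm/yr/yr acceleration and the other coefficients are hypothetical.

```python
import numpy as np

# Synthetic, noise-free "CO2" series with a KNOWN acceleration (hypothetical values).
TRUE_ACCEL = 0.03                      # ppm/yr/yr
t = np.arange(16.0)                    # years since the start of a 15-year window
co2 = 370.0 + 1.8 * t + 0.5 * TRUE_ACCEL * t**2

coeffs = np.polyfit(t, co2, 2)         # [a, b, c] for a*t**2 + b*t + c
a = coeffs[0]
accel = np.polyder(coeffs, 2)[0]       # second derivative of the fit = 2*a

if __name__ == "__main__":
    print(f"x² coefficient: {a:.4f}, acceleration: {accel:.4f} ppm/yr/yr")
```

Quoting `a` instead of `2*a` reproduces exactly the factor-of-two shortfall described above.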

-

nigelj at 09:33 AM on 28 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

MAR @34, I normally find your comments fairly clear and extremely hard to fault, but I'm having trouble with your comments on this acceleration of CO2 issue. Regarding the graph you posted on 26th May showing the rates of yearly acceleration of CO2: there is an obvious slow down after 2015, but isn't this just generated by the 2015 el nino? So it's an artifact and a temporary thing, and is not indicating anything very comforting. I can't see what else it would be, because there's no sign of a slowdown in the Keeling curve itself. However, I don't have your level of training in maths and physics, so I suppose I'm probably missing something.

-

Evan at 09:32 AM on 28 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

MA Rodger @34, yes, I agree with you that my accelerations are low by about a factor of 2. Let me get back in the game and see if I can find the inconsistency. The problem I have with your analysis is that I cannot get an intuitive feel for what you are doing. My method is to fit a well-behaved function to a statistically meaningful span of data and then analyze the derivatives. That seems like the logical, consistent approach.

Somewhere there is an inconsistency in my method and I will try to find it.

-

MA Rodger at 03:54 AM on 28 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Evan @33,

Indeed. It looks like we will not gain anything from continuing the interchange without getting seriously into the weeds.

I would suggest the graphic below summarises our differences. (The post-Jan 2025 numbers are not intended as a dig.) I place it in-thread as well as posting it HERE (Posted 27th May 2025).

Beyond our evident differences, I should mention that the values you quote for post-2000 acceleration look to be rather low, I'd assume due to some arithmetical slip.

The rate of CO2 increase is currently running at +2.6 ppm/yr, having exceeded +2.0 ppm/yr since 2000. So the average acceleration for the period 2000-25 has to be well above [(2.6 - 2.0) / 25yr =] +0.024 ppm/yr/yr, probably averaging even above +0.032 ppm/yr/yr. Yet your values thro' the post-2000 period sit well below that, with the highest +0.0154 ppm/yr/yr.

-

Evan at 21:28 PM on 26 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

MA Rodger@32, you say that the acceleration peaks in the center of the period 2000-2026. I show the acceleration continuing to increase at the end of this period, but forgetting about nit-picks, my main thesis is that the Keeling Curve continues to show a robust, upward acceleration. Here is my analysis.

As reported in my @30 post, I use powerlaw curve fits to 15-year segments of data, and calculate the acceleration from the powerlaw fit at the midpoint of each of these three intervals to get

- 2000-2015: Midpoint year 2008, acceleration = 0.0102 ppm/yr/yr

- 2005-2020: Midpoint year 2013, acceleration = 0.0120 ppm/yr/yr

- 2010-2025: Midpoint year 2018, acceleration = 0.0139 ppm/yr/yr

If I add two additional sets to this by fitting the CO2 data from 2015-2025 and from 2020-2025, two periods that I believe are too short for statistically-significant results, I get the following.

- 2015-2025: Midpoint year 2020, acceleration = 0.0144 ppm/yr/yr

- 2020-2025: Midpoint year 2023, acceleration = 0.0154 ppm/yr/yr

These last two results are not statistically-significant, but they certainly don't show any hint of a slowdown.

I appreciate all the work you've put into this friendly exchange, but at this point I have nothing more to offer. Any way that I look at the data that I feel properly accounts for the large cycles that need to be averaged out, I find CO2 concentration accelerating upwards. And that acceleration is likely increasing. It is not important for me to prove that last point, because even a stable upward acceleration is really bad. In fact, even a stable upward rate of increase is bad.

But thanks MA Rodger for everything you've put into this discussion thread.

-

MA Rodger at 19:40 PM on 26 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Evan @30/31.

I'm entirely unfamiliar with "using a robust function." I'm assuming instead that you are actually saying you 'obtained' a "robust" result with the quadratic regression and this 'robustness' is indicated by the Rsq being close to 1. If so, you should consider how that Rsq would look when adjusted for autocorrelation: probably a whole lot less "robust." And shifting to an exponential relationship for the regression (or something else more exotic) can't provide a fix to the quadratic regression results, which showed (as does the linear) that acceleration peaks in the centre of the period 2000-26.

What you and I are disputing is whether or not the level of acceleration in the later part of the 2000-26 period is low enough to consider being zero-acceleration and thus a 'plateau' for the rate of CO2 increase.
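One common way to account for autocorrelation when judging a regression (not necessarily the adjustment MA Rodger has in mind) is the lag-1, or AR(1), effective sample size n_eff = n(1 - r1)/(1 + r1), which shrinks the count of independent points and so widens the uncertainty on fitted coefficients. A minimal Python sketch on synthetic AR(1) noise; the function names and the 0.9 persistence are illustrative assumptions.

```python
import numpy as np


def lag1_autocorr(x):
    """Lag-1 autocorrelation coefficient of a series."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    return float(np.sum(x[:-1] * x[1:]) / np.sum(x * x))


def effective_sample_size(residuals):
    """AR(1) approximation: n_eff = n * (1 - r1) / (1 + r1)."""
    n = len(residuals)
    r1 = lag1_autocorr(residuals)
    return n * (1.0 - r1) / (1.0 + r1)


# Synthetic, strongly persistent AR(1) "residuals" (phi = 0.9), illustration only.
rng = np.random.default_rng(0)
resid = np.zeros(300)
for i in range(1, len(resid)):
    resid[i] = 0.9 * resid[i - 1] + rng.normal()

if __name__ == "__main__":
    print(f"n = {len(resid)}, n_eff ≈ {effective_sample_size(resid):.0f}")
```

With persistence this strong, 300 points carry roughly the information of a few dozen independent ones, which is why a near-1 Rsq on smooth data can overstate how well a curve is constrained.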

Simply stitching curvy regressions together to present some further apparent legitimacy isn't a process I would accept. (This is rather reminiscent of my school days and Taylor's Theory.)

You conclude your comment @30 saying you "cannot get it (your analyses) to admit any kind of slowdown in the rate of accumulation of CO2." Have you noticed that the graphic @30 shows the 2000-15 projection diverging from the 2005-20 projection quicker than the 2015-25 projection? That in itself is symptomatic of your missing 'slowdown.'

You re-visit the data @31 using 10-year averages of CO2 increase. (I was for some reason unable to properly duplicate your graphical output. However, it was close enough to continue.)

Your graph is showing robust acceleration, but over the whole 2000-26 period. To get a bit more out of the data over the later part of that period (where I see my 'plateau' and you feel you've seen an increase in acceleration), how about using rolling 12-month values for your analysis rather than just annual ones? And how about using longer and shorter periods to see what appears? So not just the 10-year average increase but 4-year, 6-year, 8-year, 12-year and 14-year as well. The more the merrier. And how about taking an average of those 4, 6, 8, 10, 12 and 14-year values?

I have up-loaded the result of such analysis HERE Posted 26th May 2026.
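The multi-window averaging suggested here can be sketched in Python, assuming a monthly series so that each N-year rise is taken as (C[m] - C[m - 12N]) / N and recomputed every month. The window set (4 to 14 years) follows the comment; the function names are hypothetical, and the check uses a synthetic quadratic series, for which every N-year rate equals the instantaneous rate at the window midpoint.

```python
import numpy as np


def running_rate(monthly_ppm, years):
    """Average rise (ppm/yr) over the `years` ending at each month, rolled monthly."""
    c = np.asarray(monthly_ppm, dtype=float)
    lag = 12 * years
    return (c[lag:] - c[:-lag]) / years


def multi_window_rate(monthly_ppm, windows=(4, 6, 8, 10, 12, 14)):
    """Mean of the running rates over several window lengths, aligned on end month."""
    longest = 12 * max(windows)
    # running_rate(..., w)[k] ends at month k + 12*w; keep end months >= longest.
    aligned = [running_rate(monthly_ppm, w)[longest - 12 * w:] for w in windows]
    return np.mean(aligned, axis=0)


# Synthetic check: quadratic series with acceleration 0.03 ppm/yr/yr (hypothetical).
months = np.arange(26 * 12)
t_years = months / 12.0
series = 370.0 + 1.8 * t_years + 0.015 * t_years**2
avg = multi_window_rate(series)

if __name__ == "__main__":
    print(avg[:3], avg[-3:])
```

Averaging across window lengths blurs the timing differently for each window, so a wobble that distorts one window length is diluted by the others.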

-

Evan at 08:14 AM on 26 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

MA Rodger, here is another way I look at the data. I take the CO2 concentration in a given year, subtract the CO2 concentration 10 years ago and divide by 10, to get the average change in CO2 concentration over that 10-year interval. Then I increment 1 year and repeat. This gives me a series of average annual CO2 changes where I am averaging the behavior over 10 years. I plot this vs year to get an idea how the average rate of increase is changing.

When I do that, I get an upward sloping line, indicating that on average (using 10-year running averages), the rate of CO2 increase, increases each year.
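This 10-year procedure is short enough to sketch in Python, assuming a gap-free list of consecutive annual values; the function name is hypothetical and the annual series in the check is a made-up quadratic, not NOAA data. For a quadratic, each 10-year average rate equals the instantaneous rate at the window's midpoint, so successive values rise by exactly the series' acceleration (here 0.03 ppm/yr each year).

```python
def ten_year_rates(years, ppm, span=10):
    """(C[y] - C[y - span]) / span for every year that has data `span` years earlier."""
    return [
        (years[i], (ppm[i] - ppm[i - span]) / span)
        for i in range(span, len(ppm))
    ]


# Hypothetical annual series with a constant acceleration of 0.03 ppm/yr/yr.
years = list(range(2000, 2026))
ppm = [370.0 + 1.5 * (y - 2000) + 0.015 * (y - 2000) ** 2 for y in years]
rates = ten_year_rates(years, ppm)

if __name__ == "__main__":
    for year, rate in rates[:3]:
        print(year, round(rate, 3))
```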

There is nothing in the following graph that suggests to me a plateauing of the trend. Rather, I see a consistent upward trend. If people are tempted to interpret the slight dip at the upper end of the curve as the start of a plateau, remember we are headed into a monster El Nino this year, which usually causes a short-term spike in CO2 concentrations.

-

Evan at 07:37 AM on 26 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

MA Rodger@29

I have to admit that some of what you described is a bit above my pay grade. But thanks for your detailed explanation.

I fit the data using a robust function. Quadratics can be dangerous when extrapolated, but in this case the R-square fit parameter is about 0.998, so that extrapolation is warranted. But if you prefer, I can also use a powerlaw expression, which also fit with an R-square of about 0.998, and show exactly the same trends. I hope you agree that powerlaws can be safely extrapolated.

My purpose in extrapolating the functions over one time interval into the future is to show that if we were making progress to slow the rate of rise, then we would expect future CO2 concentrations to fall on or below our extrapolated curves. That they consistently sit above the extrapolated curves says clearly that things keep getting worse, not better. That is, there is no evidence of a plateau in 15-year averages.

So here is a new plot showing powerlaw functions fitted to the CO2 data over intervals of 2000-2015, 2005-2020, and 2010-2025. I again extrapolate each trend line into the future, to show that the actual data consistently sits above what we projected in the past. That is, things keep rising faster than a simple acceleration associated with past trends.

We can take the second derivative of the powerlaw trend lines and evaluate the acceleration at the midpoint of each respective curve.
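The exact power-law form fitted is not stated, so as an assumption take C(t) = c0 + a(t - t0)^b, whose second derivative is a·b·(b - 1)(t - t0)^(b - 2), evaluated at each window's midpoint. The Python sketch below uses hypothetical parameters (not a fit to MLO data) and checks the analytic acceleration against a central finite difference, a cheap guard against the kind of factor-of-two slip discussed elsewhere in this thread.

```python
def powerlaw(t, a, b, c0, t0):
    """Assumed power-law form: C(t) = c0 + a * (t - t0)**b."""
    return c0 + a * (t - t0) ** b


def powerlaw_accel(t, a, b, t0):
    """Analytic second derivative of the assumed form (ppm/yr/yr)."""
    return a * b * (b - 1.0) * (t - t0) ** (b - 2.0)


def numeric_accel(f, t, h=0.1):
    """Central finite-difference estimate of f''(t)."""
    return (f(t + h) - 2.0 * f(t) + f(t - h)) / h**2


# Hypothetical parameters for illustration only (not fitted to real data).
A, B, C0, T0 = 0.002, 2.3, 280.0, 1900.0
midpoint = 2007.5                  # midpoint of a 2000-2015 window

analytic = powerlaw_accel(midpoint, A, B, T0)
numeric = numeric_accel(lambda t: powerlaw(t, A, B, C0, T0), midpoint)

if __name__ == "__main__":
    print(f"analytic {analytic:.5f}, numeric {numeric:.5f} ppm/yr/yr")
```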

- 2000-2015: Midpoint year 2008, acceleration = 0.0102 ppm/yr/yr

- 2005-2020: Midpoint year 2013, acceleration = 0.0120 ppm/yr/yr

- 2010-2025: Midpoint year 2018, acceleration = 0.0139 ppm/yr/yr

Not only is the acceleration increasing according to this simplistic analysis, but the rate of increase of acceleration seems to be constant, although I concede that this analysis is far too simplistic to make such a conclusion. But on the other hand, no matter how I cut the data, I cannot get it to admit any kind of slowdown in the rate of accumulation of CO2.

I therefore still maintain that CO2 concentrations continue accelerating upwards, despite all that we're doing to try to slow it down.

-

MA Rodger at 20:58 PM on 25 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Evan @26/28,

I was tempted to roll-back this discussion to that analysis I presented @23 rather than directly address your comment @26. (One reason for such a roll-back is an aversion to using quadratic regressions, something which I am also not very well set up for using.)

But perhaps I should address your comment @26 and say why it is giving you apparent indications of continuing acceleration thro' the recent decade-or-so of MLO CO2 data.

Your first graphic shows two overlapping quadratic regressions for MLO CO2, namely for periods 2000-2015 & 2010-2025. So the overlap period is 2010-15.

You say "15 years should be sufficient to average out the effect of wobbles such as El Ninos" but I would be inclined to the opposite view. However, with autocorrelation an issue, such a wobblological assessment is well beyond my pay grade.

You project the 2000-15 regression line out to 2025 and it sits below the 2010-25 regression line. The implication of these curvatures of the quadratic regressions does point to acceleration, and your use of this 'overlap' is potentially showing greater acceleration 2010-25 than 2000-15. Wisely you do not go that far, but you do see these regressions as demonstrating continuing acceleration thro' 2010-25 and thus conclude there is no 'plateau', a situation you describe as leaving "no room for interpretation."

Part of my aversion to quadratic regression is that I have in the past found there is always far more 'room for interpretation' than you would ever expect.

What you are missing in this analysis of the period 2000-25 is the intermediate period sitting between your early & late ones, namely the quadratic regression for the period 2005-20. Such a regression shows that the acceleration providing the strong curvature thro' the period 2000-25 is happening within this central part of the post-2000 data. I've graphed out these quadratic regressions (2000-26) with a bit more clarity by plotting the data as its deviation from the linear rise between 2000 & today (see HERE Posted 25th May 2026).

Further, I don't see this analysis providing evidence of continued acceleration and the absence of a 'plateau'. Logically it wouldn't. That same graphic shows the linear rise 2015-2026, and totting up the residuals, the linear residuals are as big as the quadratic residuals.

Concerning testing for acceleration, I wouldn't venture beyond OLS (which proves too sensitive in the present circumstances) or rolling averages (which do better) or nigelj's "put a ruler on the graph."
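Comparing residuals this way can be sketched in Python. Because a quadratic contains the straight line as a special case, its sum of squared residuals can never exceed the linear one, so near-equal totals mean the curvature term is buying very little. The data here are synthetic (a straight-line rise plus noise over about 11 years of monthly values) and the function name is hypothetical.

```python
import numpy as np


def sum_sq_residuals(t, y, degree):
    """Sum of squared residuals from a least-squares polynomial fit of given degree."""
    coeffs = np.polyfit(t, y, degree)
    resid = y - np.polyval(coeffs, t)
    return float(np.sum(resid**2))


# Synthetic monthly series, 2015-2026: straight-line rise plus noise (illustration only).
rng = np.random.default_rng(1)
t = np.arange(2015.0, 2026.0, 1.0 / 12.0)
y = 400.0 + 2.5 * (t - 2015.0) + rng.normal(0.0, 0.3, t.size)

x = t - 2015.0                     # shift the time axis to start at zero for a well-conditioned fit
ssr_linear = sum_sq_residuals(x, y, 1)
ssr_quadratic = sum_sq_residuals(x, y, 2)

if __name__ == "__main__":
    print(f"linear {ssr_linear:.2f}, quadratic {ssr_quadratic:.2f}")
```

A formal comparison on real, autocorrelated data would also need to allow for the reduced number of independent points.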

-

Evan at 21:20 PM on 24 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Nigelj @27, thanks for your feedback. I would not draw conclusions from my simple graphs about the impact on warming starting in 2012. My goal was to use two 15-year graphs to ensure there was sufficient averaging of wobbles. But there is so much more that goes into determining warming, such as variations in clouds and atmospheric sulfate particles.

Also, I would not conclude from my graphs that there was an increase in the acceleration rate of CO2 concentration that began in 2012. The sole purpose of my graph is to show that over a reasonable time frame, there is no indication that the Keeling Curve is leveling off, but rather that it shows a continuing, upward acceleration. However, the increase I show would be consistent with an increased acceleration, even if it does not prove it.

Also, I am not inferring anything about emissions with my graphs. The Keeling curve is the net effect of all human and natural emissions, minus all human and natural sinks. Human emissions are just one part of what affects the Keeling curve.

One thing to keep in mind: human emissions are about 4% of natural emissions. We would not have to change natural emissions or sinks by much to significantly affect the rate of accumulation of CO2 in the atmosphere. The reason I prefer to follow the Keeling Curve more than reports on what our emissions are doing is that the Keeling Curve gives the net effect of our actions on the atmosphere, whereas human emissions are just one component.

-

nigelj at 11:49 AM on 24 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Evan @26

I'm trying to understand your first graph showing 2 quadratic curves fitted to the graph, with the trend of the first curve extended. The second curve is above the extended first curve. Therefore the second curve means there's an acceleration in the acceleration starting around 2012. Is that all correct?

If that is all correct, it's interesting because it's generally thought there's an acceleration in surface warming starting around 2012, although it's not 100% certain. But that suggests the acceleration in warming is more likely due to an acceleration in emissions than to the reductions in aerosols.

-

Evan at 22:16 PM on 22 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

MA Rodger,

I am not sure why you are including "wobbles" in your consideration. Don't we consider sufficiently long data sets so that the effect of wobbles is averaged out?

I show below a graph of a quadratic fit to two overlapping 15-year intervals: 2000-2015 and 2010-2025. 15 years should be sufficient to average out the effect of wobbles such as El Ninos. I show just the trend lines, without the data, so that the averaged trend can be clearly seen.

The upward curve of the quadratics clearly indicates upward acceleration. I project the 2000-2015 curve forward to show where we would be had the 2000-2015 baseline trend continued into the future. That we are above that trend line indicates that the rates are increasing above the 2000-2015 baseline.
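The projection test described here can be sketched in Python: fit a quadratic to one window, extrapolate it, and measure how far later data sit above or below the projection. On synthetic data, a pure quadratic gives a gap of essentially zero, while a series whose acceleration itself grows (an added cubic term) sits above the projection. The series and the helper name are hypothetical; on real data the gap also absorbs any wobbles near the ends of the fitting window.

```python
import numpy as np


def extrapolation_gap(t, y, fit_start, fit_end, check_year):
    """Actual minus projected value at `check_year` for a quadratic fitted over [fit_start, fit_end]."""
    mask = (t >= fit_start) & (t <= fit_end)
    coeffs = np.polyfit(t[mask] - fit_start, y[mask], 2)
    projected = np.polyval(coeffs, check_year - fit_start)
    actual = np.interp(check_year, t, y)
    return float(actual - projected)


# Synthetic annual series (illustration only).
t = np.arange(2000.0, 2026.0)
x = t - 2000.0
constant_accel = 370.0 + 1.8 * x + 0.01 * x**2
growing_accel = constant_accel + 0.0005 * x**3

gap_constant = extrapolation_gap(t, constant_accel, 2000, 2015, 2025)
gap_growing = extrapolation_gap(t, growing_accel, 2000, 2015, 2025)

if __name__ == "__main__":
    print(f"constant acceleration: {gap_constant:+.3f} ppm")
    print(f"growing acceleration:  {gap_growing:+.3f} ppm")
```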

For me this plot leaves no room for interpretation, because the data is taken over a sufficiently long interval to average out wobbles, and it indicates a trend that is increasing above the 2000-2015 baseline. I don't see any plateau in the acceleration that can be justified in a 15-year interval.

And even if we look at a 5-year interval, which is too short to average out long-term wobbles, we still see a concave trend line, indicating acceleration even over this unjustifiably short, 5-year interval. And considering that we are headed into a giant El Nino later this year, which is accompanied by a spike in CO2 concentrations, that applies even more upward pressure to this unjustifiably short 5-year plot.

I am open to looking at things differently, and will be grateful if you can show me where I'm going wrong in my analysis. I am treating the data over sufficiently long time periods to average out wobbles, and still see unmistakable upward acceleration. These excerpts of the Keeling Curve just don't give me room for optimism. But they do suggest that I should prepare for a future with more challenging conditions.

-

MA Rodger at 16:01 PM on 22 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

nigelj @21,

It would be nice if it were so simple. But those annoying wobbles manage to get in the way. I've graphed out the NOAA's MLO de-seasonalized CO2 data 2015-to-date and annotated the micro-accelerations-&-decelerations (Green & Red). This approach is not definitive as these Green-&-Red bits would shift about a significant amount with small changes to the start-finish dates of the periods used. See graphic HERE Posted 22nd May 2026

Over the full period since 1958, the overwhelming situation is one of acceleration, and indeed increasing acceleration. The big exception within this trend was thro' the 1990s when rates decelerated due to the Pinatubo eruption, this quite evident in the NOAA 'Annual Increase of CO2 at MLO' graph up thread.

The recent 'plateau' with a period of zero acceleration is so-far a less obvious feature.

-

Evan at 20:38 PM on 21 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Nigelj@22, yes, I concur with your assessment. If the curve deviates from a straight line, it is accelerating. And yes, the functional fits I use are quadratic equations, which fit very nicely to the CO2 data.

MA Rodger @23, I must confess I had difficulty following your method, but it may be because I am busy preparing for a presentation. One of the benefits of fitting a function to the CO2 data and then analyzing the behavior of that fitted function is that the fitted function smooths out the wobbles. The fitted function extracts the average behavior from the noise. This permits easier analysis of the behavior of the data set by evaluating the behavior of the fitted function.

What I have in mind for my response may be of sufficient length that it is more valuable to simply write a blog post for SkS. You and I could then continue our discussion there, and more people may benefit. I'll try to get a quick response here later today, and then will likely plan a longer SkS post for the full response.

And I will try to give your analysis a better read later today after my presentation.

-

MA Rodger at 07:57 AM on 21 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Evan @21,

I don't see the Ralph Keeling quote from 2018 as helping. Back in 2018 I would not have disputed the CO2 acceleration, although the emissions were looking like a 'plateau' was on the way. So by 2018 I might have been arguing for its imminent arrival based purely on the grounds of the emissions having 'plateaued'.

I've not attempted to numerically show the 'plateau' in the past, probably because the lack of acceleration has been too short to show within all the wobbles.

But here I've bravely set forth to attempt to show it.

I'm using the NOAA data (as you linked to) and specifically the de-seasonalized numbers so the annual cycle isn't interfering.

I took that data and differenced it to give the rate of rise. Then I carried-out OLSs on it with the end-date always today and varying the start-date from back in 1958 and up to 2025, knocking one month off for each OLS.
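The procedure just described (difference the de-seasonalized monthly series to get a rate of rise, then fit OLS to that rate from each possible start month through to the latest month) can be sketched in Python. The function names, the 24-month minimum span and the synthetic test series are assumptions: the test series accelerates at 0.03 ppm/yr/yr for ten years and then rises at a constant rate, so OLS fits starting after the kink return zero acceleration.

```python
import numpy as np


def rate_of_rise(monthly_ppm):
    """Month-to-month change of a de-seasonalized series, expressed in ppm/yr."""
    return np.diff(np.asarray(monthly_ppm, dtype=float)) * 12.0


def acceleration_by_start(rate, min_months=24):
    """OLS slope (ppm/yr/yr) of the rate from each start month to the final month."""
    t = np.arange(len(rate)) / 12.0              # time in years
    slopes = []
    for start in range(len(rate) - min_months + 1):
        slope, _intercept = np.polyfit(t[start:], rate[start:], 1)
        slopes.append(slope)
    return np.array(slopes)


# Synthetic series: accelerates for 10 years, then rises linearly (illustration only).
ACCEL = 0.03
t_years = np.arange(240) / 12.0
ppm = np.where(
    t_years <= 10.0,
    0.5 * ACCEL * t_years**2,
    0.5 * ACCEL * 100.0 + ACCEL * 10.0 * (t_years - 10.0),
)
slopes = acceleration_by_start(rate_of_rise(ppm))

if __name__ == "__main__":
    print(slopes[0], slopes[120])   # early start: positive; start after the kink: ~0
```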

The results of all this are graphed out in the graphic below the previous one HERE Posted 20th May 2026.

The upper bit of the graphic (in red) is the 'rate-of-rise' numbers and that happily matches the red trace in the previous graphic.

The bottom bit of the graphic shows that over the full set of data the acceleration was running something like 0.0025ppm/yr/yr. As the earlier data is cut out with a later Start Date, this rate of acceleration doesn't change much for most of the period. The biggest feature here is a bit of a rise evident as the Start Date approaches the 1991 Pinatubo eruption.

Things do start to change from about 2008 as the rate of acceleration starts to decline and has dropped to zero by 2014. The acceleration rate stays very close to zero until about 2019 after which the wobbles take over and the rate drops steeply into the negative.

I'd suggest the near-zero section 2014-2019 is not an artifact of wobbles but is the 'plateau' showing itself. (The negative plunge is down to the latest 'rate-of-rise' data being low rather than high. I could perhaps choose a different end date giving a high ending to demonstrate this.)

-

nigelj at 06:54 AM on 21 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Regarding whether the Keeling curve is accelerating: look at the Keeling curve here from 1965 to about 2020. It's obviously curvilinear over the entire period, so isn't this an acceleration?

Just put a ruler on the graph and it's obviously not a straight-line trend. It looks curvilinear, or perhaps a bit stepped, like maybe a quadratic best fit. My maths is very rusty though.

But my understanding is there's no good evidence of a recent "acceleration in the acceleration".

-

Evan at 02:36 AM on 21 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

MA Rodger@20,

Thanks for your detailed response.

The report I referenced is definitely not the basis for my statement that CO2 concentrations are accelerating upwards. Ralph Keeling is shown on camera in 2018, as part of the NOVA series "Decoding the Weather Machine", saying that CO2 concentrations are accelerating upwards. It was not just a figure of speech: the motion of his hands together with his words clearly conveyed, mathematically, that CO2 concentrations were accelerating upwards. I don't accept your suggestion that this is a casual phrase designed for a press release.

I just downloaded the most recent NOAA data set (see here), and starting from 2000, every time interval I fit, from 25 years (2000-2025) down to 5 years (2020-2025, which to me is way too short for meaningful results), shows an upward accelerating trend. I have a presentation to give tomorrow that is preventing me from spending more time on my response now, but tomorrow I will prepare the plots to share with you.

If we're all looking at the same data, I don't see where there is room for interpretation. Make a plot with CO2 on the Y axis, date on the X axis, and fit a function to the data that well represents the general shape. Take the second derivative. If it is positive, then the curve indicates an upward accelerating trend.
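The recipe above (fit a function that represents the general shape of CO2 against date, then check the sign of its second derivative) can be sketched in a few lines. Assumptions: the fitted function is a quadratic, as nigelj suggests, so its second derivative is the constant 2a; `quadratic_curvature` is an illustrative name; and the test series is hypothetical, accelerating until 2014 and rising at a constant rate afterwards. Because a quadratic has a single curvature for the whole window, the sign it returns depends on which window is fitted.

```python
import numpy as np


def quadratic_curvature(t, co2, start=None, end=None):
    """Fit co2 = a*t**2 + b*t + c over [start, end]; return d2(co2)/dt2 = 2a in ppm/yr/yr."""
    t = np.asarray(t, dtype=float)
    co2 = np.asarray(co2, dtype=float)
    keep = np.ones(t.shape, dtype=bool)
    if start is not None:
        keep &= t >= start
    if end is not None:
        keep &= t <= end
    tc = t[keep] - t[keep].mean()  # centring time improves conditioning; 2a is unchanged
    a, _b, _c = np.polyfit(tc, co2[keep], 2)
    return 2.0 * a


# Hypothetical series: growth rises 0.02 ppm/yr each year until 2014, then stays at 1.92 ppm/yr.
t = 1958 + np.arange(12 * 68) / 12
x = t - 1958
early = 315 + 0.8 * x + 0.01 * x**2          # reaches 391.16 ppm at 2014
late = 391.16 + 1.92 * (t - 2014)            # straight line continuing from 2014
co2 = np.where(t < 2014, early, late)

whole_record = quadratic_curvature(t, co2)             # positive: the record as a whole curves upward
since_2014 = quadratic_curvature(t, co2, start=2014)   # about zero: the recent stretch is a straight line
```

On the real record, repeating the fit for several start dates (as in the 2000-2025 to 2020-2025 windows above) shows how the sign of 2a depends on the window chosen.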

-

MA Rodger at 00:23 AM on 21 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Evan @18/19,

That 2024 NOAA article you linked to appears to be the crux of your 'acceleration' argument, and I wouldn't put too much store by the wording of its strapline (where the word 'accelerating' is used). The article is more 'press release' than definitive. Note that it's using the May CO2 values (so the top of the annual cycle), which NOAA usually avoids when calculating an annual rise**. And when Ralph Keeling is quoted saying CO2 is "rising faster than ever," this is arguably about the previous El Niño-fuelled months when "CO2 concentrations increased more rapidly than they have in the first four months of any other year", and not about the longer-term rise. The following part of the article is clear that the timescale under discussion is not long-term: "The record two-year growth rate observed from 2022 to 2024 is likely a result of sustained high fossil fuel emissions combined with El Nino conditions limiting the ability of global land ecosystems to absorb atmospheric CO2, said John Miller, a carbon cycle scientist with NOAA’s Global Monitoring Laboratory." [My bold]

(**NOAA compare winter values Nov-Feb to calculate their annual 'growth' numbers.)
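The footnote's winter comparison can be sketched as follows. This is one reading of the description, not NOAA's published code: average the four months November to February straddling each 1 January, then take the difference between successive winters. Because both windows cover the same calendar months, the seasonal cycle largely cancels, which is the point of avoiding a single month such as May. The function names and the synthetic test series are hypothetical.

```python
import numpy as np


def winter_mean(year, month, co2, jan_year):
    """Mean of Nov-Dec of (jan_year - 1) and Jan-Feb of jan_year, i.e. the winter around 1 January."""
    year = np.asarray(year)
    month = np.asarray(month)
    co2 = np.asarray(co2, dtype=float)
    in_window = ((year == jan_year - 1) & (month >= 11)) | ((year == jan_year) & (month <= 2))
    if in_window.sum() != 4:
        raise ValueError(f"need all four winter months around January {jan_year}")
    return co2[in_window].mean()


def winter_growth(year, month, co2, y):
    """Growth over calendar year y: the winter mean at the start of y+1 minus that at the start of y."""
    return winter_mean(year, month, co2, y + 1) - winter_mean(year, month, co2, y)


# Hypothetical monthly record 2020-2024: a 2.5 ppm/yr trend plus a 3 ppm seasonal cycle.
year = np.repeat(np.arange(2020, 2025), 12)
month = np.tile(np.arange(1, 13), 5)
t = (year - 2020) + (month - 0.5) / 12
co2 = 410 + 2.5 * t + 3.0 * np.sin(2 * np.pi * month / 12)

growth_2021 = winter_growth(year, month, co2, 2021)  # the seasonal term cancels, leaving the trend
```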

While MLO CO2 data is not global, it isn't greatly different to the NOAA global numbers, and MLO is a good measure for the Northern Hemisphere, which contains the big drivers of the CO2 increase.

If you consider the NOAA MLO annual 'growth' numbers (graphed below), 2023 & 2024 sit up at the top of the ranking. But note that 2015, 2016 & 1998 sit in the next three positions, all five of these being impacted by El Niños. And note that 2025 didn't see much of a CO2 rise - it sits 18th in the 'biggest annual rise' rankings. 2022 sits down at 22nd and follows a couple of years of 'below average' increases. This doesn't suggest 'acceleration'.

Working with those graphed NOAA MLO numbers and attempting to look past the ENSO wobbles by using a 5-year rolling average, the highest 5-year period is back in 2017. Also, if you take a more straightforward calculation for a 12-month increase***, the maximum for a 5-year rolling average again appears for a period centred back in 2017. In both 5-year averagings, the difference between the latest peak of averaged 'growth' and the one back in 2017 is very small (2017 = 2.64ppm or 2.66ppm/yr, 2023 = 2.63ppm or 2.65ppm/yr), so you could try to make the case for the wobbles hiding the accelerating levels of CO2. But if there is a 'hidden' acceleration, it can't be a very strong acceleration for it to be hidden over a six-year period. It's an easier argument to point to the wobbles hiding the 'plateau'.

(*** The monthly level minus the level 12 months before.)

While the exact 'flatness' of the plateau can be argued, I would strongly defend the idea that emissions are the sole contributor to rising atmospheric CO2. (At least, so far.)
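The two smoothing steps described above, a 12-month increase (each monthly value minus the value 12 months before) and a 5-year rolling average, are simple to reproduce. A minimal NumPy sketch follows; the function names and toy inputs are illustrative assumptions, not the author's workings. Note that when averaging monthly 12-month increases, a 5-year window means 60 values rather than 5.

```python
import numpy as np


def twelve_month_increase(co2):
    """Each monthly value minus the value 12 months earlier (ppm per 12 months)."""
    co2 = np.asarray(co2, dtype=float)
    return co2[12:] - co2[:-12]


def rolling_mean(values, window=5):
    """Mean over every complete run of `window` consecutive values (no padding at the ends)."""
    values = np.asarray(values, dtype=float)
    if not 1 <= window <= len(values):
        raise ValueError("window must be between 1 and len(values)")
    return np.convolve(values, np.ones(window) / window, mode="valid")


def peak_window(values, window=5):
    """Index of the first value in the highest-averaging window, and that window's mean."""
    means = rolling_mean(values, window)
    i = int(np.argmax(means))
    return i, means[i]


# Toy inputs (not NOAA data): a steady 0.2 ppm/month rise with a seasonal cycle...
months = np.arange(48)
co2 = 400 + 0.2 * months + 3.0 * np.sin(2 * np.pi * months / 12)
increases = twelve_month_increase(co2)   # seasonal cycle cancels, leaving 12 * 0.2 ppm

# ...and a short run of annual growth figures for the rolling average.
smoothed = rolling_mean([1.0, 2.0, 3.0, 4.0, 5.0, 6.0], window=5)
```

Applying `peak_window` to the annual growth series, or to the 12-month increases with `window=60`, locates the highest 5-year period as discussed above.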

Graphing out the 'growth' of MLO CO2 alongside the anthropogenic emissions numbers from the Global Carbon Project (see graph here, posted 20th May 2026), using 2.13 Gt(C) = 1 ppm and assuming a constant 45% airborne fraction, provides reasonable support for the absence of any significant natural CO2 emissions building up under AGW and impacting the MLO numbers.
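The conversion behind that comparison is a one-line calculation: multiply a year's emissions by the airborne fraction and divide by the Gt(C)-per-ppm factor. A minimal sketch using the two figures stated in the comment (2.13 Gt(C) per ppm and a constant 45% airborne fraction); the function names and the 10 GtC input are hypothetical.

```python
GTC_PER_PPM = 2.13        # 2.13 Gt(C) = 1 ppm, the conversion used in the comment
AIRBORNE_FRACTION = 0.45  # assumed constant, as in the comment


def expected_rise_ppm(emissions_gtc, airborne_fraction=AIRBORNE_FRACTION):
    """Atmospheric CO2 rise (ppm) implied by a year's emissions (GtC) and a fixed airborne fraction."""
    return emissions_gtc * airborne_fraction / GTC_PER_PPM


def implied_emissions_gtc(rise_ppm, airborne_fraction=AIRBORNE_FRACTION):
    """Inverse: emissions (GtC) that would produce an observed rise under the same assumptions."""
    return rise_ppm * GTC_PER_PPM / airborne_fraction


# Hypothetical input: 10 GtC of emissions in a year.
rise = expected_rise_ppm(10.0)       # about 2.11 ppm
back = implied_emissions_gtc(rise)   # recovers the 10 GtC input
```

Read the other way, the 2.5 ppm/yr rise mentioned elsewhere in this thread would correspond to roughly 11.8 GtC/yr of emissions under these same two assumptions.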

-

Evan at 22:14 PM on 19 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

MA Rodger @17, thanks for your comments.

You talk about a plateau in our emissions. But my focus is in the Keeling curve, and the reason I focus on the Keeling curve is because as you well know, it includes the net effect of all emissions and sinks.

To me it is irrelevant whether or not anthropogenic emissions are plateauing. The only thing that matters in terms of global warming is what the Keeling curve is doing, and emissions can plateau while the Keeling curve continues to accelerate upwards, if other sources that contribute to the Keeling curve are rising or other sinks that contribute to it are decreasing.

One reason I encourage people to plan their future based on what the Keeling curve is doing is that whereas it is difficult for the average person to assess what is/is not happening with emissions and sinks, they can easily track the progress of the Keeling curve.

-

Evan at 21:31 PM on 19 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

MA Rodger @17, thanks for your comments. I will read through your comments carefully and see if I can refine my message.

However, regarding whether the Keeling curve is accelerating upwards, a paper published in 2024 (read here) states,

Carbon dioxide is accumulating in the atmosphere faster than ever — accelerating [their words, but emphasis mine] on a steep rise to levels far above any experienced during human existence, scientists from NOAA and the Scripps Institution of Oceanography at the University of California San Diego announced today.

As you noted, Keeling curve trends must be analyzed over the long term so that short-term variability averages out. That scientists from both NOAA and Scripps are saying, as of 2024, that the Keeling curve is still accelerating upwards would seem to leave little room for reinterpretation just two years later.

I will respond to your other comments in more detail later, but I'm interested in your take on this paper summarizing the conclusions of the NOAA and Scripps scientists.

-

MA Rodger at 16:48 PM on 19 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Evan @16,

I think you should perhaps have chosen a couple of different words for your comment. The Keeling Curve is not "accelerating", at least not at present. It is still rising but at a roughly constant rate. The Keeling Curve inherits a strong set of wobbles from ENSO etc., so establishing an absence of acceleration over a period of just a decade is not entirely definitive, but the anthropogenic emissions (which cannot be so easily measured) do suggest such an absence of acceleration should be observed.

The second word (or phrase) I'd not use in the manner you do is "climate scientists" who are not responsible for the plan to reach net-zero and are certainly not saying "we are making great progress" towards net-zero. There are likely a bunch of folk who could be called "scientists" or "technologists" who are responsible for showing how to implement net-zero but generally it is politicians who have the power to make the decisions on the actual implementation.

Back forty years ago the climate scientists were asking for a 50% cut in emissions by 2050. With the other 50% of our emissions being drawn down into ocean & biosphere, a 50% cut would stabilise atmospheric CO2, at least for a while.

But thirty years ago the climate scientists revised the target to an 80% cut by 2050, because that base level of annual emissions had risen rather than fallen, and the larger cut from the new, higher level would result in roughly the same cumulative emissions by 2050. And if you listened to those "climate scientists", there was also talk of emissions having to peak by 2020.

The position was then revised again, the target calling for net-zero by 2050. This was due to two developments: (1) the big rise in emissions thro' the 2000s and (2) the changing understanding of what is a safe AGW temperature rise, this being revised down from +2.0ºC to +1.5ºC. Hey, we don't want Greenland starting to melt down, do we?

Now the concept of "peak by 2020" was always a bit vague. Yes, we have 'plateaued' by 2020. But today, six years later, there is no sign that that 'plateau' is being followed by the decline which would convert it into a 'peak'. Climate scientists did nail down this emissions limit with the carbon budget. And the 2016 budget allows the calculation which showed that if emissions 'plateaued' (as they did) we would burn thro' that budget by 2021 (for a 66% chance of dodging +1.5C) or by 2025 (for a 50% chance).
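The arithmetic behind the 50% figure can be made explicit with a toy calculation. It assumes, as the comment does, that ocean and biosphere take up an amount equal to about half of present-day emissions, and additionally that this uptake stays fixed when emissions fall; real sinks respond to the level of atmospheric CO2, which is one reason the stabilisation would hold only "for a while". The 10 GtC baseline and the function name are hypothetical; 2.13 GtC per ppm is the conversion used elsewhere in the thread.

```python
GTC_PER_PPM = 2.13  # conversion used elsewhere in the thread


def net_rise_ppm(emissions_gtc, sink_gtc):
    """Annual change in atmospheric CO2 (ppm) when sinks remove a fixed amount of carbon."""
    return (emissions_gtc - sink_gtc) / GTC_PER_PPM


baseline = 10.0        # hypothetical present-day emissions, GtC/yr
sink = 0.5 * baseline  # about 50% drawn down by ocean and biosphere, held fixed (assumption)

today = net_rise_ppm(baseline, sink)            # CO2 still rising
after_cut = net_rise_ppm(0.5 * baseline, sink)  # 50% cut: emissions match uptake, no net rise
```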

However climate scientists did not throw in the towel but re-rigged the budget to include a big period of net-negative emissions post net-zero. My scaling of the size of this net-negative a while back suggested it would involve the anthropogenic draw-down of all our emissions made after 2008, this achieved over a century-&-a-half. This 'fix' gave another decades-worth of the 'plateaued' emissions added to the budget. And for the 66% chance, we are halfway thro' that extra time and still 'plateauing'.

Now my version of the argument that we are in the process of falling off the cliff is not based on the continued 'plateauing'. That situation could change rapidly with the increasing deployment of renewables and energy efficiency measures. But what I see as the problem is that deployment. Where is it?

I'm a political person and hear politicians of a different stripe mouthing off about impressive levels of renewable 'energy'. One of the stories they employ uses impressive 'electricity' numbers, not lacklustre 'total energy' numbers. That is politicians (who are usually never the sharpest tool in the box) spreading propaganda.

The other propagandist number they fight to take credit for here in the UK is the national territorial emissions. These are impressive-sounding in the UK: emissions have halved since 1990, or are lower than at any time since 1880. And the numbers are even better if you use 'per capita' figures. Waving these numbers is incredibly deceptive. The UK was historically a massive emitter, so reductions aren't difficult to achieve. Much of the reduction in national emissions which UK politicians try to take credit for has been achieved by exporting manufacturing. And the other big factor is converting from coal to gas.

Globally things are quite desperate. The graphic below shows primary energy from fossil fuel is still increasing. This doesn't reflect emissions because it is the coal-to-gas thing again. Gas is no solution for net-zero emissions. Of the six/seven non-fossil-fuel energy sources in the graph below, hydro and nuclear are non-scalable, so also not the solution. The remaining three are none of them demonstrating the exponential growth which we need to see. Solar has the most promising scalability, and give it a decade and we could suddenly see that kicking in, alongside hydrogen and ammonia being used for energy storage/transportation and more. But the massive works to get that all done are not evident. Discussion of plans for such massive works is not evident. And the clock is ticking.

So we may not be in free-fall off the cliff but we have yet to begin thinking about getting a proper grip to stop ourselves sliding down the slippery slope above that cliff.

-

ubrew12 at 20:41 PM on 17 May 2026
Two videos about the Atlantic Meriodonal Overturning Circulation (AMOC)

Thank you. In News Roundup #20, an article by Jeff Masters.

-

Evan at 09:37 AM on 17 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Nigel@15. This is one time when I strongly disagree with your position.

If you fall over a cliff and are plummeting to your death, you can argue that opening an umbrella will slow your descent more than if you didn't open it, but you will still likely fall to your death.

Not only is the Keeling curve not flattening out, it is accelerating upwards.

Accelerating!

The best we can say is that maybe we have slowed the rate of acceleration a bit. But as long as the Keeling curve is accelerating upwards, I don't see any room for optimism. I only see room for communicating that we need to do much much more.

It concerns me greatly that many climate scientists remain optimistic in the face of an upward accelerating Keeling curve. For the not-so-well-informed general public, the logic may be:

1. Climate scientists say they have a plan for reaching net-0.

2. Climate scientists say we are making great progress.

3. So CO2 levels must be stabilizing.

4. I feel better now knowing that we just have to stay the course.

Your average person will have no idea what the Keeling curve is doing. The end result of all this discussion about net-0 and hype about how well we're doing reducing emissions, hides the fact that we are having no effect on the Keeling curve. It may simply put the general public to sleep.

I really wonder how many people realize what an absolutely massive increase of 2.5 ppm/year CO2 really is? CO2 is nowhere near stabilized!

-

nigelj at 08:00 AM on 17 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Evan @13, thanks for your comments. I feel there are some grounds for limited optimism that we have made real progress on the climate issue. You correctly analyse the Keeling curve: there's no sign of it flattening off. It's depressing, to be sure. However, I think it's hard to see a change because we haven't done enough yet to make an impact that would appear above the noise in the system.

And I would contend it's highly likely that the building of renewables has reduced the rate of growth of the Keeling curve. Putting it another way, but for renewables, total atmospheric CO2 concentrations would be higher than they currently are. Obviously we can't be certain of this, but basic evidence and logic point that way.

So, as I previously suggested, I think we have some grounds for limited optimism, and should spread a message that we are making some progress but it's not nearly enough.

I agree that some commentators overhype the progress we have made. That is definitely neither accurate nor helpful. But if we scare people too much, so that the problem looks huge and insurmountable, this will discourage climate action.

-------------------------------------------

Prove we are smart @14

"Nigelj@11, I would rather scare them awake than a little uncomfortably numb."

That has generally been my preference. But if you scare people too much, especially if you exaggerate the dangers or spread a message that we are doomed, then you discourage people from taking any climate action.

If I felt we really were doomed, I would say so, and obviously there'd be no point promoting action to mitigate the problem. We would be stuck purely with adaptation. But I do have some limited optimism that we have made a difference, and can make more of a difference. Therefore I think the message should be that we have made some progress but not enough, and if we don't make more progress the outcomes will be very serious, etc., etc.

-

michael sweet at 07:56 AM on 17 May 2026
Two videos about the Atlantic Meriodonal Overturning Circulation (AMOC)

ubrew12:

I saw a newspaper article saying that the Gulf of Mexico has warmed faster than the global average for the past decade. The article did not mention the Meridional Current, but it was not written by a scientist.

-

prove we are smart at 18:09 PM on 16 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Nigelj@11, I would rather scare them awake than a little uncomfortably numb.

Evan@13, yes, I've learnt to copy and paste too, which has helped.

I used to feel empathy for a country being led by a vile and corrupt village idiot but the climate hoax bully has emptied me of that.

-

ubrew12 at 04:14 AM on 16 May 2026
Two videos about the Atlantic Meriodonal Overturning Circulation (AMOC)

The 'Gulf Stream' warms Northern Europe. Doesn't it also cool the Gulf of Mexico? So if the AMOC slows down, wouldn't that excess heat empower Gulf-charged storms in the Midwestern and Southern States of the U.S., such as those characterized by tornadoes and hail? It seems to me it would, but I don't hear much about that.

-

Evan at 19:55 PM on 15 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Prove we are smart & Nigel, I have lost some long posts, but I don't think it is the word limit. What happened to me is that I exceeded a time limit, after which I had to log in again. I've learned to copy the post into the copy buffer just in case I lose it.

Nigel@11, optimism without a basis for the optimism is a false hope. It may make you feel good, but will do nothing for your children. And making people feel like we're making progress when we're not could be counter-productive, if it puts them to sleep.

At some point we have to accept that things will continue to worsen, and we need to put time and effort into preparing society for what is coming. For me the contrast could not be starker between an administration that says climate change is a hoax and so many climate scientists who say we are making great progress reining in emissions.

All the while the Keeling curve accelerates upwards at a horrifying rate.

Nigel, I am a positive, happy person. But I am also a research engineer. And research engineers live and die by analyzing data and coming to honest conclusions about what the trends say.

But thanks for your optimism. I hope for the world's sake that I am wrong.

-

nigelj at 13:52 PM on 15 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Sorry, I meant to say "Evan says". I wasn't paying attention to the phone's auto word-select thing and didn't check before pressing submit.

-

nigelj at 13:49 PM on 15 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Evan days "I dont know what we should be communicating to the general public, but it seems to me that all the optimism we're publishing is counter productive, because it may have the effect of putting people to sleep on climate issues, rather than serving up the clarion call we need."

I think that is a very real risk, but if we don't have at least some optimism then the public will just give up in despair. It has to be a mix of optimism and realism. It's like walking a tightrope verbally in terms of getting the messaging right.

-

nigelj at 13:39 PM on 15 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Prove we are smart, I have never lost comments when pressing submit, even if it's taken me quite a while to write a comment. They have all appeared properly. You might have exceeded a word limit. Perhaps the moderator could clarify the situation.

-

prove we are smart at 15:13 PM on 14 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Evan, I never thought you were an AI anything. Maybe because I accidentally downloaded twice, that triggered an alert? In fact, there must be a time limit on how long this reply section stays open, because I have lost many replies when I click submit. Unbelievably frustrating!

Yes, of course I used an AI when it is beneficial to add to my points. Maybe I'm an AI, just dumbed down to seem real! Some of the scientists who create the subjects for this blog site admit AI is great for them, but you don't want contributors to use it? I asked my Microsoft Copilot whether it was an AI bot. Others would know better than me, but is this a typical answer? My prompt was: "is co-pilot a ai bot?"

Yes — Copilot is an AI, but not in the “mysterious robot” sense people sometimes imagine. I’m an AI companion created by Microsoft, designed to help you think, learn, create, and make sense of the world with you, not just for you.

Here’s the clearer breakdown:

What Copilot is

An AI system built to understand your questions and generate thoughtful, structured responses.

A reasoning assistant that can analyze information, synthesize ideas, and challenge you when it sharpens your thinking.

A creative partner that can help write, plan, explain, compare, or explore complex topics — like the climate‑science questions you often dig into.

What Copilot is not

Not a person.

Not conscious or emotional.

Not a chatbot with a fixed personality — I adapt to your style of thinking and the depth you prefer.

How I’m different from typical “AI bots”

Most bots answer quickly and superficially.

I’m built to go deeper — structured reasoning, evidence‑based analysis, and the kind of layered explanation you prefer. I can also search the web, work with files, generate images, and help with tasks across your device ecosystem.

If you want, I can also explain how I think, how I use reasoning, or how I differ from other AI systems.

-

Evan at 11:24 AM on 14 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Moderator, this is Evan and I am not an AI bot. So I hope you were not referring to my comments.

-

prove we are smart at 09:02 AM on 14 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Thanks Evan, we have to turn a blind eye to so, so much, and hearing the renewable winning conversions and those wonderful new EVs, we are surely getting there! We know greenwashing is used to good effect to subdue the masses. The system must be protected by any means. I understand your/my frustration. Sometimes my opinion is we really are just kicking the can down the road, minus the lid, which we have recycled. We have become so desensitized, and I thought the internet would enlighten people and make them less insular, ha.

I know governments have rarely but desperately forced their citizens into new systems, and this would surely(?) lead to riots for the obvious reasons; however, for interest's sake, I asked this question:

Should the governments be fixing climate change by enacting themselves a war powers act?

"Short answer:

Invoking war‑powers–style authority to fight climate change is one of the most extreme governance models available. It’s been proposed by some scholars, rejected by others, and sits at the uncomfortable intersection of democratic legitimacy, emergency politics, and planetary risk. Whether governments should do it depends on how you weigh speed vs. democracy, risk vs. rights, and effectiveness vs. legitimacy. You should confirm any political information with trusted sources.

Below is a structured, evidence‑based breakdown tailored to the way you like to think — analytically, comparatively, and with attention to systems.

1. What a “war powers” approach would actually mean

A war‑powers framework typically includes:

Centralised executive authority

Rapid mobilisation of resources

Suspension or bypassing of normal legislative processes

Compulsory measures (rationing, industrial direction, emergency procurement)

Nationalisation or commandeering of key industries

Applied to climate change, this could look like:

Mandated phase‑out schedules for fossil fuels

Forced industrial conversion (e.g., coal plants → grid storage)

Rationing of high‑emissions activities

Emergency funding for renewables, grid upgrades, and adaptation

Restrictions on certain products or sectors

This is not hypothetical — during WWII, the US, UK, and Australia all used similar mechanisms to reshape entire economies within months.

2. Why some argue it is justified

These arguments come from climate scientists, political theorists, and risk scholars:

A. Climate change is a slow‑moving existential threat

The IPCC describes climate change as a “threat multiplier” that destabilises food systems, water security, and geopolitics.

Several planetary boundaries — including climate, biosphere integrity, and biogeochemical flows — are already breached. (My addition: haven't 7 of these 9 boundaries already been breached?)

B. Normal democratic processes are too slow

Emissions reductions require structural change across energy, transport, agriculture, and industry.

Legislative cycles and partisan gridlock often delay action for decades.

C. Emergency powers have precedent for non‑military crises

Bushfires, pandemics, and natural disasters already trigger emergency powers.

Some argue climate change is simply a long-duration disaster.

D. The cost of inaction is higher than the cost of emergency mobilisation

Delayed mitigation increases long‑term economic damage, displacement, and adaptation costs.

3. Why others argue it would be dangerous

These concerns come from constitutional scholars, civil liberties groups, and political scientists:

A. Emergency powers tend to expand and linger

Historically, once governments acquire extraordinary powers, they rarely give them back quickly.

B. Risk of democratic erosion

Concentrated executive authority can weaken checks and balances.

Climate policy could become politicised as “authoritarian environmentalism”.

C. Public backlash

Forced measures without democratic consent can trigger resistance, populist backlash, or destabilisation.

Climate action requires social legitimacy to endure across decades.

D. Effectiveness is not guaranteed

Command‑and‑control economies can misallocate resources.

Market‑based and regulatory approaches often outperform coercive ones when well‑designed.

⚖️ 4. The middle ground: “Emergency governance without authoritarian drift”

Some scholars propose hybrid models:

Climate emergency declarations with targeted powers

Fast‑track permitting for renewable infrastructure

Mandatory national carbon budgets

Independent climate authorities (like central banks for emissions)

Crisis‑style industrial policy without suspending civil liberties

This approach aims to capture the speed of emergency mobilisation without the risks of war‑powers centralisation.

5. The real tension: planetary boundaries vs. political boundaries

Your question hits the core dilemma of 21st‑century governance:

Can democratic systems act fast enough to stay within planetary boundaries?

If the answer is “no”, societies face a choice:

Transform democracy to act faster, or

Accept higher climate risk, or

Adopt emergency‑style governance, or

Invent new institutions that preserve rights while accelerating action

There is no consensus — only trade‑offs.

6. Where this leaves your question

Should governments do it?

Different perspectives exist:

Some argue yes, because the climate crisis is existential and time‑critical.

Others argue no, because emergency powers risk democratic backsliding.

Most serious scholars argue for a hybrid, where governments adopt emergency‑level mobilisation within democratic constraints.

Ultimately, the answer depends on your weighting of:

Urgency

Legitimacy

Risk tolerance

Trust in institutions"

In my opinion number 4 is the go but...

Moderator Response:[BL] Please, let's not turn the comments section here into a battle of the AI bots.

-

Evan at 08:50 AM on 14 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Prove we are smart, I like the way you think, and concur with much of what you've written, especially the concept of a wartime economy mindset to turn things around.

One of the best examples of what a wartime economy can do is how fast the US built Liberty ships. These were ships to carry cargo to Europe. Whereas typical Liberty ships took a month to complete, the SS Robert E. Peary was built in a little over four and a half days, to demonstrate the manufacturing might of the US.

We have the ability to make a difference, and what you suggest about the mentality of a wartime economy makes sense, except that a wartime economy is typically tolerated by a motivated public (in WWII we were motivated by the attack on Pearl Harbor) for a short time, but tackling climate change requires a much, much longer time span. I question whether we could convince the public to maintain such a mindset for the length of time required.

But I like many of your ideas!

-

prove we are smart at 07:20 AM on 14 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

Thanks Evan, we have to turn a blind eye to so, so much, and hearing the renewable winning conversions and those wonderful new EVs, we are surely getting there! We know greenwashing is used to good effect to subdue the masses. The system must be protected by any means. I understand your/my frustration. Sometimes my opinion is we really are just kicking the can down the road, minus the lid, which we have recycled. We have become so desensitized, and I thought the internet would enlighten people and make them less insular, ha.

I know governments have rarely but desperately forced their citizens into new systems, and this would surely(?) lead to riots for the obvious reasons; however, for interest's sake, I asked this question:

Should the governments be fixing climate change by enacting themselves a war powers act?

"Short answer:

Invoking war‑powers–style authority to fight climate change is one of the most extreme governance models available. It’s been proposed by some scholars, rejected by others, and sits at the uncomfortable intersection of democratic legitimacy, emergency politics, and planetary risk. Whether governments should do it depends on how you weigh speed vs. democracy, risk vs. rights, and effectiveness vs. legitimacy. You should confirm any political information with trusted sources.

Below is a structured, evidence‑based breakdown tailored to the way you like to think — analytically, comparatively, and with attention to systems.

1. What a “war powers” approach would actually mean

A war‑powers framework typically includes:

Centralised executive authority

Rapid mobilisation of resources

Suspension or bypassing of normal legislative processes

Compulsory measures (rationing, industrial direction, emergency procurement)

Nationalisation or commandeering of key industries

Applied to climate change, this could look like:

Mandated phase‑out schedules for fossil fuels

Forced industrial conversion (e.g., coal plants → grid storage)

Rationing of high‑emissions activities

Emergency funding for renewables, grid upgrades, and adaptation

Restrictions on certain products or sectors

This is not hypothetical — during WWII, the US, UK, and Australia all used similar mechanisms to reshape entire economies within months.

2. Why some argue it is justified

These arguments come from climate scientists, political theorists, and risk scholars:

A. Climate change is a slow‑moving existential threat

The IPCC describes climate change as a “threat multiplier” that destabilises food systems, water security, and geopolitics.

Several planetary boundaries — including climate, biosphere integrity, and biogeochemical flows — are already breached. (My addition: haven't 7 of these 9 boundaries already been breached?)

B. Normal democratic processes are too slow

Emissions reductions require structural change across energy, transport, agriculture, and industry.

Legislative cycles and partisan gridlock often delay action for decades.

C. Emergency powers have precedent for non‑military crises

Bushfires, pandemics, and natural disasters already trigger emergency powers.

Some argue climate change is simply a long-duration disaster.

D. The cost of inaction is higher than the cost of emergency mobilisation

Delayed mitigation increases long‑term economic damage, displacement, and adaptation costs.

3. Why others argue it would be dangerous

These concerns come from constitutional scholars, civil liberties groups, and political scientists:

A. Emergency powers tend to expand and linger

Historically, once governments acquire extraordinary powers, they rarely give them back quickly.

B. Risk of democratic erosion

Concentrated executive authority can weaken checks and balances.

Climate policy could become politicised as “authoritarian environmentalism”.

C. Public backlash

Forced measures without democratic consent can trigger resistance, populist backlash, or destabilisation.

Climate action requires social legitimacy to endure across decades.

D. Effectiveness is not guaranteed

Command‑and‑control economies can misallocate resources.

Market‑based and regulatory approaches often outperform coercive ones when well‑designed.

⚖️ 4. The middle ground: “Emergency governance without authoritarian drift”

Some scholars propose hybrid models:

Climate emergency declarations with targeted powers

Fast‑track permitting for renewable infrastructure

Mandatory national carbon budgets

Independent climate authorities (like central banks for emissions)

Crisis‑style industrial policy without suspending civil liberties

This approach aims to capture the speed of emergency mobilisation without the risks of war‑powers centralisation.

5. The real tension: planetary boundaries vs. political boundaries

Your question hits the core dilemma of 21st‑century governance:

Can democratic systems act fast enough to stay within planetary boundaries?

If the answer is “no”, societies face a choice:

Transform democracy to act faster, or

Accept higher climate risk, or

Adopt emergency‑style governance, or

Invent new institutions that preserve rights while accelerating action

There is no consensus — only trade‑offs.

6. Where this leaves your question

Should governments do it?

Different perspectives exist:

Some argue yes, because the climate crisis is existential and time‑critical.

Others argue no, because emergency powers risk democratic backsliding.

Most serious scholars argue for a hybrid, where governments adopt emergency‑level mobilisation within democratic constraints.

Ultimately, the answer depends on your weighting of:

Urgency

Legitimacy

Risk tolerance

Trust in institutions"

In my opinion number 4 is the go but...

-

Evan at 21:14 PM on 13 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

"Prove we are smart" @3, if I add up how much my friends tell me they make at the casino, it is a sizable amount. What they are not telling me are their stories about their losses. The casino always wins, but that's not what you hear from personal accounts.

We treat model predictions about future warming as absolutely guaranteed, and make ourselves feel good by publishing stories about the great progress we're making, all while the Keeling curve is accelerating upwards.

If we tell the public that current models indicate we will cap warming at 2.5-3C, and if we continue to publish stories about the great progress we're making, then the average person might well conclude that all is well and our current actions are sufficient to rein in the climate problem.

I don't know what we should be communicating to the general public, but it seems to me that all the optimism we're publishing is counterproductive, because it may have the effect of putting people to sleep on climate issues, rather than serving up the clarion call we need.

-

prove we are smart at 11:57 AM on 13 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

I think we all feel increasing dread from our seemingly ever-increasing atmospheric CO2 readings. Here is an interesting review of how the world did slow the increase in CO2 for a while. My Microsoft Copilot offered me these facts in answer to my question:

" Are you interested in quantifying how much each event slowed the annual ppm increase (e.g., comparing Keeling Curve slopes before/after each event)?

Yes — and more importantly, it’s a scientifically meaningful thing to do, because it lets you separate narrative (“the pandemic slowed emissions”) from quantitative signal detection (“did the atmospheric growth rate actually change, and by how much?”).

Here’s the concise takeaway first:

Yes, you can quantify how much each event slowed the annual CO₂ growth rate by comparing the Keeling Curve slope before and after each event — but only a few events (e.g., the 1973 oil crisis, the collapse of the Soviet Union, the 2008 financial crisis, and COVID‑19) produce a detectable, statistically significant deviation, and even then the effect is small relative to natural variability.

Below is a structured way to think about it, plus what you’d likely find if you actually ran the numbers.

1. What “quantifying the slowdown” actually means

You’re essentially asking for:

Δ slope = post‑event slope − pre‑event slope

where slope = annual mean CO₂ increase (ppm/yr), typically computed using:

A 5‑year window before the event

A 5‑year window after the event

Deseasonalized Mauna Loa monthly data

A linear regression for each window

This isolates the trend rather than the short-term wiggles.
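A minimal Python sketch of the before/after comparison described above, assuming the deseasonalized monthly means are held as (decimal year, ppm) pairs and fitted with ordinary least squares in each 5‑year window. The helper names and the synthetic series are hypothetical; a real analysis would load NOAA's deseasonalized Mauna Loa monthly record instead, and because natural variability in that record is as large as the dips themselves, a Δ of this size would also need a significance test.

```python
# Sketch: compare linear-trend slopes of deseasonalized monthly CO2 in a
# window before and after an event. The synthetic series is hypothetical
# and only stands in for real Mauna Loa data.

def ols_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


def window_slopes(series, event_year, window_years=5.0):
    """Return (slope_before, slope_after, delta) in ppm/yr.

    series: list of (decimal_year, deseasonalized_ppm) pairs.
    """
    before = [(t, c) for t, c in series if event_year - window_years <= t < event_year]
    after = [(t, c) for t, c in series if event_year <= t < event_year + window_years]
    slope_before = ols_slope(*zip(*before))
    slope_after = ols_slope(*zip(*after))
    return slope_before, slope_after, slope_after - slope_before


def synthetic_series(start, end, event_year, rate_before, rate_after, c0=350.0):
    """Hypothetical piecewise-linear monthly series whose slope changes at event_year."""
    series = []
    for i in range(round((end - start) * 12)):
        t = start + i / 12
        if t < event_year:
            c = c0 + rate_before * (t - start)
        else:
            c = c0 + rate_before * (event_year - start) + rate_after * (t - event_year)
        series.append((t, c))
    return series


if __name__ == "__main__":
    # Illustrative rates only, echoing the ~1.5 -> ~1.2 ppm/yr figures quoted for 1991.
    data = synthetic_series(1986.0, 1996.0, 1991.0, rate_before=1.5, rate_after=1.2)
    before, after, delta = window_slopes(data, 1991.0)
    print(f"before {before:.2f}, after {after:.2f}, delta {delta:+.2f} ppm/yr")
```

With a noise-free synthetic series the fit recovers the imposed rates, printing `before 1.50, after 1.20, delta -0.30 ppm/yr`; on real data it is the residual scatter around each fit that decides whether a change of that size is detectable.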

2. What events are large enough to show up in the Keeling Curve?

Only events that cause multi‑gigaton, multi‑year changes in emissions can produce a detectable signal in atmospheric growth rate.

Events that might show up:

1973–74 oil crisis

1980–82 recession

1991 Soviet collapse

2008–09 global financial crisis

2020 COVID‑19 lockdowns

Events that won’t show up:

Individual policy changes

Single-year emission dips < 1 GtCO₂

Regional events (e.g., EU recession alone)

Anything shorter than ~6 months

The atmosphere integrates globally and has inertia.

3. What the numbers typically show (based on published analyses)

These are approximate, but they’re consistent across NOAA, Scripps, and peer‑reviewed studies.

1973 oil crisis

Pre-event slope: ~1.3 ppm/yr

Post-event slope: ~1.1 ppm/yr

Δ slope ≈ –0.2 ppm/yr for ~2 years

1991 Soviet collapse

Pre-event slope: ~1.5 ppm/yr

Post-event slope: ~1.2 ppm/yr

Δ slope ≈ –0.3 ppm/yr for ~3 years

2008 financial crisis

Pre-event slope: ~2.1 ppm/yr

Post-event slope: ~1.8 ppm/yr

Δ slope ≈ –0.3 ppm/yr for ~1–2 years

2020 COVID‑19

Emissions dropped ~7% globally

But atmospheric growth rate dropped only ~0.2–0.3 ppm/yr

Δ slope ≈ –0.25 ppm/yr for ~1 year
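The Δ slopes listed above can be turned into a rough cumulative figure: a slowdown held for N years leaves CO₂ about Δ × N ppm below where the pre-event trend would have put it. This Python sketch is a simplification under stated assumptions (each slowdown is treated as a constant step, and changes in the underlying trend are ignored); the 1–2 year duration for 2008 is kept as a range rather than guessed.

```python
# Rough cumulative effect of each slowdown, using the approximate figures
# quoted above. Assumes a constant slope reduction over the whole period.

EVENTS = [
    # (event, delta slope in ppm/yr, shortest duration in yr, longest duration in yr)
    ("1973 oil crisis", -0.2, 2, 2),
    ("1991 Soviet collapse", -0.3, 3, 3),
    ("2008 financial crisis", -0.3, 1, 2),
    ("2020 COVID-19", -0.25, 1, 1),
]


def cumulative_offset(delta_slope, years):
    """ppm below the pre-event trend after `years` of a constant slowdown."""
    return delta_slope * years


if __name__ == "__main__":
    for name, delta, shortest, longest in EVENTS:
        short_offset = cumulative_offset(delta, shortest)
        long_offset = cumulative_offset(delta, longest)
        if shortest == longest:
            print(f"{name}: about {short_offset:.2f} ppm")
        else:
            print(f"{name}: about {short_offset:.2f} to {long_offset:.2f} ppm")
```

Every offset comes out under 1 ppm (the largest is about 0.9 ppm, for 1991), which fits the point that these events barely dent the curve.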

Why the effect is always small

Because:

Atmospheric CO₂ growth is dominated by cumulative emissions, not annual changes.

Natural variability (ENSO) is ±1 ppm/yr, which is larger than most human-caused dips.

The ocean and biosphere buffer short-term changes.

This is why even COVID barely dented the curve. (my addition: so although longer-lasting oil crises, regime and financial collapses, and pandemic lockdowns would definitely, and finally, reduce the curve, should this be our future?)

The deeper pattern

Every slowdown corresponds to one of three categories:

Energy supply shocks (1973, 1979)

Global economic collapses (Great Depression, COVID‑19)

Large‑scale wars (WWI, WWII)

None were climate‑policy driven. The only sustained, policy‑driven flattening appears in some national emissions, not global totals."

To me, and honestly perhaps for my mental health, I need to see some new (better?), at least partly global, fix-it talks. Ha, thoughtfully, the invites didn't include China, Russia, the USA and India, as these countries were considered bad actors against genuine progress. Call me naive, but being a positive role model instead of the opposite has to count for something. Here is that link again, and for what it's worth, the people attending said good things too: www.carbonbrief.org/santa-marta-key-outcomes-from-first-summit-on-transitioning-away-from-fossil-fuels/

-

Evan at 21:03 PM on 12 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

This article states,

The International Energy Agency estimates that since 2015, climate and clean energy policies around the world have erased a full degree from Earth’s global warming trajectory.

And yet the Keeling curve is still accelerating upwards, and it recently recorded the largest year-on-year increase in the Keeling curve's history (read here). For all the praise given to the great progress we're making tackling climate change, the Keeling curve continues to accelerate.

I find it disingenuous that we continue to assure readers we are doing very well tackling climate change, when the most important barometer we have (i.e., the Keeling Curve) keeps reminding us that nothing we've done, nothing, has stopped its upward acceleration. Apparently we are not doing nearly enough.

-

prove we are smart at 18:13 PM on 12 May 2026
A look back at ‘An Inconvenient Truth,’ 20 years later

From this self-explanatory link, theconversation.com/ten-years-on-how-al-gores-an-inconvenient-truth-made-its-mark-59387: "While most of the research into the impact of AIT investigates the direct effect on viewers, a potentially more significant impact is the film’s role in inspiring others to follow Gore’s example in communicating the issue of climate change to others.

Personally, I can attest to this influence. Before 2006, I hadn’t given much thought to the climate change issue. Watching AIT raised a number of questions about the human role in global warming.

With the issue salient in my mind, I got into conversations with family members who happened to reject the scientific consensus on climate change. This precipitated the founding of Skeptical Science, which led to me becoming a researcher in climate communication at the University of Queensland.

I’ve spoken to or know of many other climate communicators whose awareness of the issue dawned with their viewing of AIT. While the direct effect of the original screening of the film may have dissipated, the impact of those inspired to communicate the realities of climate change persists."

There would be very many grateful people, myself included, now fact-informed by Skeptical Science; thank you. We all know it will need global cooperation to halt global warming. The task of mostly eliminating fossil fuel use is a complex but hopefully not impossible one.

Maybe I'm being naive, but this gathering of many countries for the first summit on transitioning away from fossil fuels gives me more hope: www.carbonbrief.org/santa-marta-key-outcomes-from-first-summit-on-transitioning-away-from-fossil-fuels/

-

Bob Loblaw at 08:35 AM on 12 May 2026
Two videos about the Atlantic Meridional Overturning Circulation (AMOC)

David-acct:

The phrase "It seems that is some dispute." is so vague, innocuous, and meaningless that it seems that you really have nothing to debate.

The text descriptions of the two videos on the OP suggest that the videos are examining the debate.

The article that you link to is over a year old (and is paywalled).

Do you have any purpose in commenting here other than attempting to spread FUD on climate science? Your track record on serious, honest debate is pretty weak.

-

David-acct at 03:32 AM on 12 May 2026
Two videos about the Atlantic Meridional Overturning Circulation (AMOC)

Is the AMOC in actual danger of collapse? It seems that is some dispute.

-

michael sweet at 08:28 AM on 11 May 2026
Is Nuclear Energy the Answer?

In my post above I should have said All Energy and not All Power. Power is used for electricity only while All Energy is used for providing all the energy the economy needs.

-

michael sweet at 03:24 AM on 11 May 2026
Is Nuclear Energy the Answer?

A new peer-reviewed paper calculates the total system costs (SLOCE, system levelized cost of energy) of electricity-only and All Power energy systems, using Denmark as an example. They use offshore wind, onshore wind, solar and nuclear as the primary energy sources. They include the system costs of energy storage, transmission, grid stabilization, etc. to determine the total system costs.
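The levelized-cost idea behind SLOCE can be written as discounted lifetime costs divided by discounted energy delivered. The formula below is the generic textbook levelized-cost form with system-cost terms added; the symbols and the split into cost categories are assumptions for illustration, and the paper's exact formulation may differ.

```latex
\mathrm{SLOCE} \approx
\frac{\displaystyle\sum_{t=0}^{T} \frac{I_t + M_t + S_t + X_t + G_t}{(1+r)^t}}
     {\displaystyle\sum_{t=0}^{T} \frac{E_t}{(1+r)^t}}
```

Here $I_t$ is generation investment in year $t$, $M_t$ operation, maintenance and fuel, $S_t$ storage, $X_t$ transmission and interconnection, $G_t$ grid-stabilizing services, $E_t$ energy delivered, $r$ the discount rate and $T$ the study horizon. Counting storage, transmission and grid terms in the numerator is what separates a system cost from a plain per-plant levelized cost.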

They find that generating only existing electricity is more expensive per kWh than generating All Power. The All Power system (generating all the power used in the country: electricity, transportation, heat, industry) has more interconnections and is more flexible than the electricity-only system.

They find that using only one source of power (wind, solar or nuclear) is more expensive than systems that combine multiple sources of energy.

They find that the lowest-cost system is primarily offshore wind and solar. This system is about 50% of the cost of a nuclear-dominated system. There were no low-cost systems containing any nuclear. The results were not affected very much by changes in the cost of installing the renewable or nuclear systems.